Dive deep into the world of hinge loss, a crucial concept in machine learning and optimization. Learn its applications, benefits, and how to optimize it effectively.

Introduction

In machine learning and optimization, hinge loss plays a pivotal role. This article sheds light on how hinge loss works, where it is applied, and which strategies help you optimize it effectively. Whether you’re a seasoned data scientist or a curious learner, this guide will equip you with valuable insights into this fundamental concept.

Hinge Loss: Unraveling the Basics

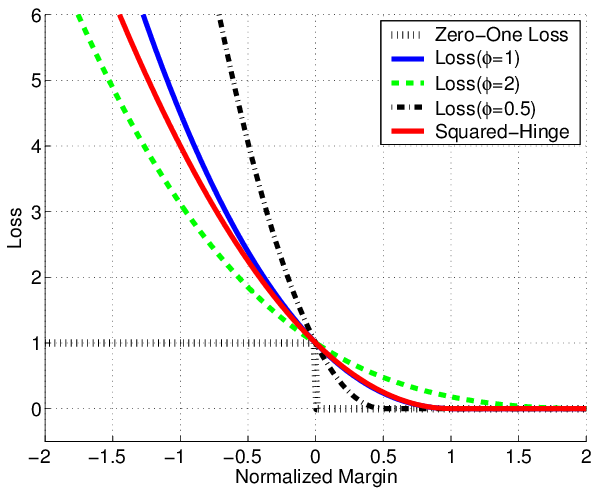

Hinge loss, also known as max-margin loss, is the loss function behind support vector machines (SVMs) and other maximum-margin classifiers. It serves as the training objective by penalizing every example that is misclassified or that falls inside the margin around the decision boundary. For binary classification, the hinge loss function is mathematically defined as:

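$$L(y, f(x)) = \max(0,\ 1 - y \cdot f(x))$$

In this equation, $y \in \{-1, +1\}$ represents the true label of the data point and $f(x)$ is the decision function’s output. The loss is zero when $y \cdot f(x) \ge 1$, that is, when the point is classified correctly and lies outside the margin. It grows linearly as the point moves into the margin or onto the wrong side of the decision boundary. Minimizing the hinge loss therefore encourages correct classification with a margin.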
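To make the definition concrete, here is a minimal sketch in plain Python. The function names `hinge_loss` and `mean_hinge_loss` and the sample scores are illustrative assumptions, not part of any library. Labels are assumed to be encoded as -1 or +1.

```python
def hinge_loss(y, score):
    """Hinge loss for one example: y is -1 or +1, score is f(x)."""
    return max(0.0, 1.0 - y * score)


def mean_hinge_loss(labels, scores):
    """Average hinge loss over a dataset."""
    return sum(hinge_loss(y, s) for y, s in zip(labels, scores)) / len(labels)


# Hypothetical labels and decision-function outputs for four points.
labels = [1, 1, -1, -1]
scores = [2.0, 0.5, -3.0, 0.5]

losses = [hinge_loss(y, s) for y, s in zip(labels, scores)]
print(losses)                           # [0.0, 0.5, 0.0, 1.5]
print(mean_hinge_loss(labels, scores))  # 0.5
```

The second point is classified correctly but sits inside the margin, so it still incurs a loss of 0.5. The fourth point is misclassified and is penalized more heavily.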

Applications of Hinge Loss

Hinge loss finds its applications in various domains, including:

- Image Classification: Hinge loss helps optimize image classification models by minimizing misclassification errors and maximizing class separability.

- Natural Language Processing: In sentiment analysis and text classification, hinge loss aids in fine-tuning models for accurate predictions.

- Bioinformatics: Hinge loss is used in protein structure prediction, assisting in the identification of potential structures based on given data.

- Finance: Support vector machines with hinge loss are utilized for stock market trend prediction and risk assessment.

- Medical Diagnostics: Hinge loss contributes to disease classification based on patient data, enabling accurate diagnoses.

Optimizing Hinge Loss for Maximum Effectiveness

To harness the power of hinge loss, consider these optimization strategies:

- Regularization: Apply regularization techniques such as L1 or L2 regularization to prevent overfitting and enhance model generalization.

- Kernel Tricks: Implement kernel methods to transform data into higher-dimensional spaces, enabling better class separation.

- Hyperparameter Tuning: Fine-tune parameters like the regularization coefficient and kernel parameters to achieve optimal results.

- Feature Engineering: Carefully select and engineer features to improve the model’s ability to capture underlying patterns.

- Data Augmentation: Increase the diversity of your dataset through augmentation techniques, enhancing the model’s robustness.
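As a rough illustration of the regularization strategy, the sketch below trains a linear classifier by batch subgradient descent on the L2-regularized mean hinge loss, $\frac{\lambda}{2}\lVert w \rVert^2 + \frac{1}{n}\sum_i \max(0,\ 1 - y_i (w \cdot x_i + b))$. The function names, the toy dataset, and the values of `lam`, `lr`, and `epochs` are assumptions chosen for the example. In practice you would tune them, for instance with cross-validation, and likely use an established SVM library instead.

```python
def train_linear_hinge(points, labels, lam=0.01, lr=0.1, epochs=200):
    """Minimize lam/2 * ||w||^2 + mean hinge loss by batch subgradient descent."""
    n, dim = len(points), len(points[0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w = [lam * wi for wi in w]  # gradient of the L2 penalty
        grad_b = 0.0
        for x, y in zip(points, labels):
            score = sum(wi * xi for wi, xi in zip(w, x)) + b
            if y * score < 1:  # misclassified or inside the margin
                for j in range(dim):
                    grad_w[j] -= y * x[j] / n
                grad_b -= y / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(w, b, x):
    """Return +1 or -1 depending on the side of the hyperplane."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0 else -1


# Hypothetical, linearly separable toy data with labels in {-1, +1}.
points = [(2, 2), (3, 3), (2, 3), (3, 2), (-2, -2), (-3, -3), (-2, -3), (-3, -2)]
labels = [1, 1, 1, 1, -1, -1, -1, -1]

w, b = train_linear_hinge(points, labels)
predictions = [predict(w, b, x) for x in points]
print(predictions == labels)
```

A larger `lam` shrinks the weights more aggressively and widens the margin at the cost of tolerating more margin violations. A smaller `lam` fits the training data more tightly.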

FAQs

Q: What is the primary purpose of hinge loss? A: Hinge loss penalizes predictions that are wrong or that fall inside the margin, which pushes machine learning models toward correct classification with a clear separation between classes.

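Q: How does hinge loss differ from other loss functions? A: Hinge loss assigns zero loss to examples that are classified correctly beyond the margin and a linearly growing penalty otherwise, so training focuses on maximizing the margin between classes. This makes it well suited to support vector machines and classification tasks.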

Q: Can hinge loss be used for regression tasks? A: Hinge loss is primarily designed for classification problems and may not be directly applicable to regression tasks.

Q: What is the relationship between support vector machines and hinge loss? A: A soft-margin SVM is trained by minimizing the hinge loss over the training data together with a regularization term, which yields the maximum-margin hyperplane for classification.

Q: How can I prevent overfitting when using hinge loss? A: Employ techniques like regularization and cross-validation to prevent overfitting and enhance the generalization of your model.

Q: Are there real-world examples of hinge loss applications? A: Yes, hinge loss is widely used in fields such as image classification, sentiment analysis, and medical diagnostics.

Conclusion

In the realm of machine learning, hinge loss stands as a vital tool for achieving accurate and robust classification models. By understanding its mathematical underpinnings, exploring its versatile applications, and adopting effective optimization techniques, you can leverage hinge loss to unlock the true potential of your data-driven endeavors.

Remember, whether you’re classifying images, predicting stock trends, or diagnosing medical conditions, hinge loss empowers you to make informed decisions and drive impactful results.
